When part of your cloud bill is untagged (for example, a shared API gateway or a platform service used by multiple teams), you can’t attribute costs using tags alone. Without proper cost allocation, these shared costs end up in an “other” bucket, making chargeback or showback impossible. This guide shows you how to split shared API costs proportionally by importing an external usage metric (such as API call counts per team) into Costory and using it to build a reusable Virtual Dimension that automatically attributes the right share of cost to each team.

Prerequisites

- You have identified the total cost of this API in your billing data.

- You have a usage metric from your internal monitoring tool (e.g., API call counts per team) that can serve as an allocation key to split costs across callers.

| date | team | region | api_call_count |
|---|---|---|---|
| 2025-12-01 00:00:00 | Team_A | North | 1081 |
| 2025-12-01 00:00:00 | Team_A | South | 1330 |
| 2025-12-01 00:00:00 | Team_A | East | 1201 |
| 2025-12-01 00:00:00 | Team_A | West | 505 |
| 2025-12-01 00:00:00 | Team_B | North | 983 |

What you get: per-team cost attribution

- A per-team cost view based on actual API usage, ready for chargeback or showback.

- A reusable Virtual Dimension that automatically allocates the shared cost going forward, with no manual updates needed.

How it works

The overall flow is: export a usage metric from your monitoring system, import it into Costory, preview the proportional split, then lock it in with a Virtual Dimension.

How to split shared API costs step by step

Schedule the metric export

Create a scheduled job (cron, Airflow, GitHub Actions, or any orchestrator) that writes the metric file to your S3 bucket every day. The export should follow these conventions:

- Output format: A Parquet file with one row per combination of dimensions per day. At minimum, include a `date` column, one or more dimension columns (e.g., `team`, `region`), and a numeric value column (e.g., `api_call_count`).

- S3 prefix: Write files under a consistent prefix, for example `s3://<bucket>/api_cost_allocations/`. Costory scans this prefix for new files.

- Overwrite strategy: You can safely write a new file each day. Costory applies an incremental overwrite strategy: for a given day, the last data received wins, and existing data for other days is preserved.
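As a rough illustration of the export job described above, the sketch below aggregates raw call counts into one row per day, team, and region, then writes them to Parquet. The input shape (tuples of team, region, call count), the function names, and the output file name are all assumptions made for this example; they are not part of Costory. Writing the file needs `pandas` with a Parquet engine such as `pyarrow`, and uploading it under your S3 prefix is left to your orchestrator.

```python
from collections import Counter
from datetime import date


def aggregate_calls(events, day):
    """Sum API calls per (team, region) for a single day.

    `events` is an iterable of (team, region, call_count) tuples, a
    hypothetical shape standing in for your monitoring tool's output.
    """
    totals = Counter()
    for team, region, calls in events:
        totals[(team, region)] += calls
    # One row per combination of dimensions, with the columns from this guide.
    return [
        {
            "date": day.isoformat(),
            "team": team,
            "region": region,
            "api_call_count": count,
        }
        for (team, region), count in sorted(totals.items())
    ]


def write_parquet(rows, path):
    """Write the rows to a Parquet file (requires pandas and pyarrow)."""
    import pandas as pd  # imported lazily so aggregation works without pandas

    df = pd.DataFrame(rows)
    df["date"] = pd.to_datetime(df["date"])  # store the day as a timestamp
    df.to_parquet(path, index=False)


rows = aggregate_calls(
    [("Team_A", "North", 1000), ("Team_A", "North", 81), ("Team_B", "North", 983)],
    date(2025, 12, 1),
)
# Hypothetical file name; upload the result under your S3 prefix afterwards.
# write_parquet(rows, "api_usage_2025-12-01.parquet")
```

Because the last data received for a given day wins, re-running the job for the same day replaces that day's values rather than double counting them.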

Create the metric in Costory

You can create the metric either with Terraform or manually.

The metric will take a few minutes to be ingested. Once ready, you can see it and its values in Costory.

Preview the reallocation

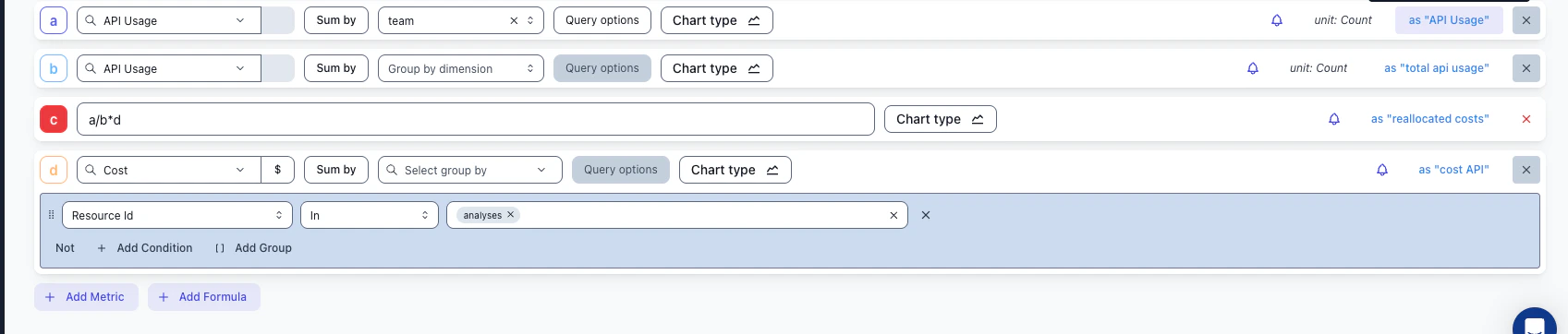

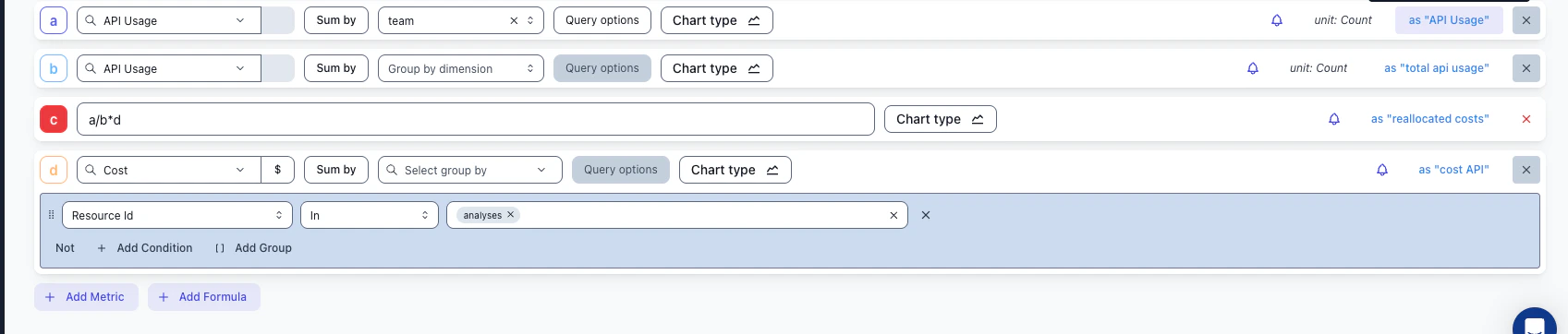

Before committing to a Virtual Dimension, preview how the reallocated costs would look. The idea is to build a formula that computes each team’s proportional share of the total API cost:

The formula

| Row | What it represents | Example |
|---|---|---|
| a | API Usage metric, grouped by team | Team A: 4 117 calls, Team B: 983 calls |
| b | Total API usage across all teams | 5 100 calls |
| c | The reallocation formula: a / b * d | Team A gets 80.7% of the cost |
| d | Total cost of the shared API resource | $1 000 |

a / b * d gives you: (team’s calls / total calls) * total cost = team’s share.
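To check the arithmetic by hand, here is a small sketch that applies the same formula to the sample numbers from this guide. The function name `split_cost` is made up for the example; it only mirrors the a / b * d computation and is not how Costory evaluates formulas internally.

```python
def split_cost(usage_by_team, total_cost):
    """Split total_cost across teams in proportion to their usage."""
    total_usage = sum(usage_by_team.values())  # row b: total API usage
    if total_usage == 0:
        raise ValueError("no usage recorded, cannot split the cost")
    # row c: a / b * d for each team
    return {
        team: calls / total_usage * total_cost
        for team, calls in usage_by_team.items()
    }


# row a: usage per team, summed over regions from the sample metric
usage = {"Team_A": 1081 + 1330 + 1201 + 505, "Team_B": 983}
shares = split_cost(usage, 1000.0)  # row d: $1 000 total cost

for team, cost in shares.items():
    print(f"{team}: ${cost:.2f}")
# Team_A: $807.25
# Team_B: $192.75
```

The shares always sum to the total cost, so nothing is left over in an “other” bucket.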

Create the Virtual Dimension

Create a Virtual Dimension that automatically allocates the shared cost going forward.

- Add a new rule for those API costs:
  - Rely on reallocation based on usage metrics
  - Use the metric you just imported

- Choose an allocation strategy:
  - Identity mapping: The new label value is the team name
  - Regex mapping: The API name contains the team name (extract it with a regex)
  - Manual mapping: The API name has no relation to the team name; map each name manually

- Save the Virtual Dimension
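To make the three allocation strategies more concrete, the sketch below shows what each one does conceptually to a metric value. It is only an illustration: the naming scheme (`team_a-orders-api`), the regex, and the lookup table are hypothetical, and in practice you configure the mapping in Costory rather than in code.

```python
import re

# Hypothetical naming scheme: API names start with the team name, e.g. "team_a-orders-api".
TEAM_PATTERN = re.compile(r"^(team_[a-z]+)-")

# Hypothetical manual table for API names that carry no team information.
MANUAL_MAP = {"orders-api": "Team_A", "search-api": "Team_B"}


def identity_mapping(value):
    """The metric value already is the team name."""
    return value


def regex_mapping(value):
    """Extract the team name embedded in the API name, or None if absent."""
    match = TEAM_PATTERN.match(value)
    return match.group(1) if match else None


def manual_mapping(value):
    """Look the API name up in a hand-maintained table, or None if unknown."""
    return MANUAL_MAP.get(value)


print(identity_mapping("Team_A"))          # Team_A
print(regex_mapping("team_a-orders-api"))  # team_a
print(manual_mapping("search-api"))        # Team_B
```

Identity mapping is the simplest option when your metric is already grouped by team, as in the sample data in this guide.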

Next steps: automate and share

- Set up a weekly Slack report to share the reallocated costs with each team automatically.

- Explore the results in the Cost Explorer to validate cost attribution per team.

- Build on this allocation to create a budget tracking workflow per team.